The hum of servers filled the air, a familiar backdrop for the team at CloudSec Solutions. It was early in the week, and news of AWS Security Hub Extended’s general availability had just dropped. The team, still buzzing from a Monday morning briefing, was already testing the new features.

AWS Security Hub Extended, as per the official announcement, aims to provide a unified, full-stack enterprise security solution. This means bringing together AWS detection services and curated partner solutions. The goal? A single, simplified experience for security teams.

“It’s a game changer,” said Maria Rodriguez, a senior security analyst, as she reviewed the initial setup. “We’ve been waiting for something like this.”

The announcement was met with a mix of excitement and cautious optimism. The market as a whole seems ready for this kind of integrated approach. Cloud security, after all, has become increasingly complex.

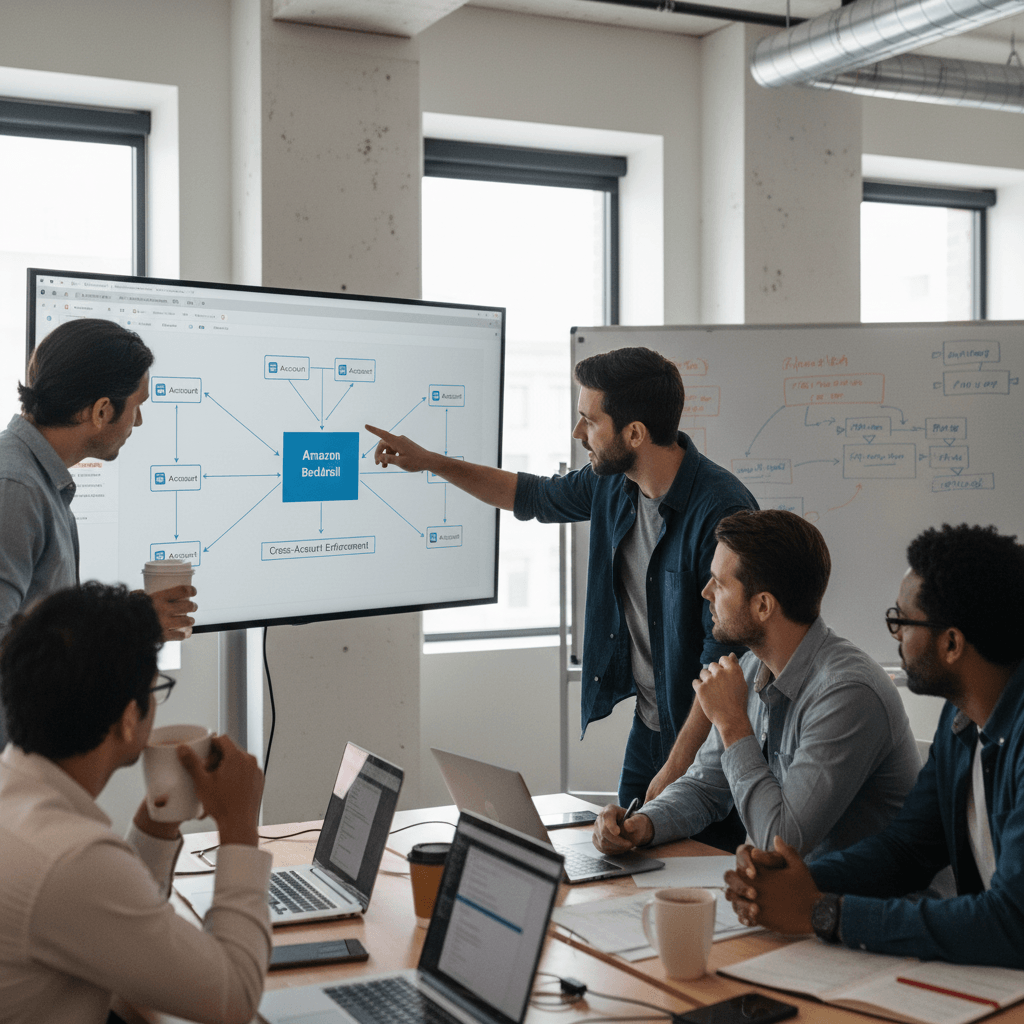

One of the key selling points is the integration of partner solutions. AWS has curated a list of partners whose tools will now work within Security Hub, including companies specializing in vulnerability management, threat intelligence, and incident response. Analysts believe the move will significantly reduce the time security teams spend on integration and management. It’s a bit like finally having all the tools in one toolbox.

The integration of AWS detection services is another critical component. These services include Amazon GuardDuty, which handles threat detection, and Amazon Inspector, which handles vulnerability scanning. The extended version streamlines access to these services and provides a centralized view of security findings.
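
As a rough illustration of what a centralized view of findings could look like, the sketch below groups findings by source product and severity. It assumes the findings arrive as dictionaries in the AWS Security Finding Format (ASFF) and reads only the `ProductName` and `Severity.Label` fields. The function name `summarize_findings` and the sample records are hypothetical, and nothing here reflects an API specific to Security Hub Extended, which the announcement does not detail.

```python
from collections import Counter, defaultdict


def summarize_findings(findings):
    """Count findings per source product and severity label.

    Each finding is assumed to be an ASFF-style dict; missing fields
    fall back to placeholder values instead of raising an error.
    """
    summary = defaultdict(Counter)
    for finding in findings:
        product = finding.get("ProductName", "Unknown")
        label = finding.get("Severity", {}).get("Label", "UNKNOWN")
        summary[product][label] += 1
    # Convert to plain dicts so the result is easy to print or serialize.
    return {product: dict(counts) for product, counts in summary.items()}


# Hypothetical sample records, not real findings.
SAMPLE_FINDINGS = [
    {"ProductName": "GuardDuty", "Severity": {"Label": "HIGH"}},
    {"ProductName": "GuardDuty", "Severity": {"Label": "LOW"}},
    {"ProductName": "Inspector", "Severity": {"Label": "HIGH"}},
    {"ProductName": "Inspector", "Severity": {"Label": "HIGH"}},
]

if __name__ == "__main__":
    for product, counts in summarize_findings(SAMPLE_FINDINGS).items():
        print(product, counts)
```

In a real account, records like these could plausibly be fetched with the existing Security Hub `get_findings` call in boto3, which returns them under a `Findings` key. Whether the extended offering exposes partner findings the same way is an assumption.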

The announcement also highlighted the benefits for compliance. Security Hub Extended provides tools to assess and manage compliance with industry standards, such as PCI DSS and CIS benchmarks. This is crucial for organizations operating in regulated industries.

According to a recent report by Gartner, the cloud security market is projected to reach $77.2 billion by 2027. This growth is driven by the increasing adoption of cloud services and the rising number of cyber threats. AWS, with its dominant position in the cloud market, is well positioned to capitalize on this trend.

Of course, there are challenges. The success of Security Hub Extended will depend on the quality of partner integrations and the ability of AWS to keep pace with evolving threats. Still, the initial response has been overwhelmingly positive. The market seems to be saying, “It’s about time.”

The team at CloudSec Solutions, meanwhile, was already planning its next steps. The goal is to fully integrate the new tools into the company’s existing security infrastructure. It’s a process that will take time, but the potential benefits are clear: a more efficient, more effective, and more comprehensive security posture.

And that, it seems, is what everyone is hoping for.