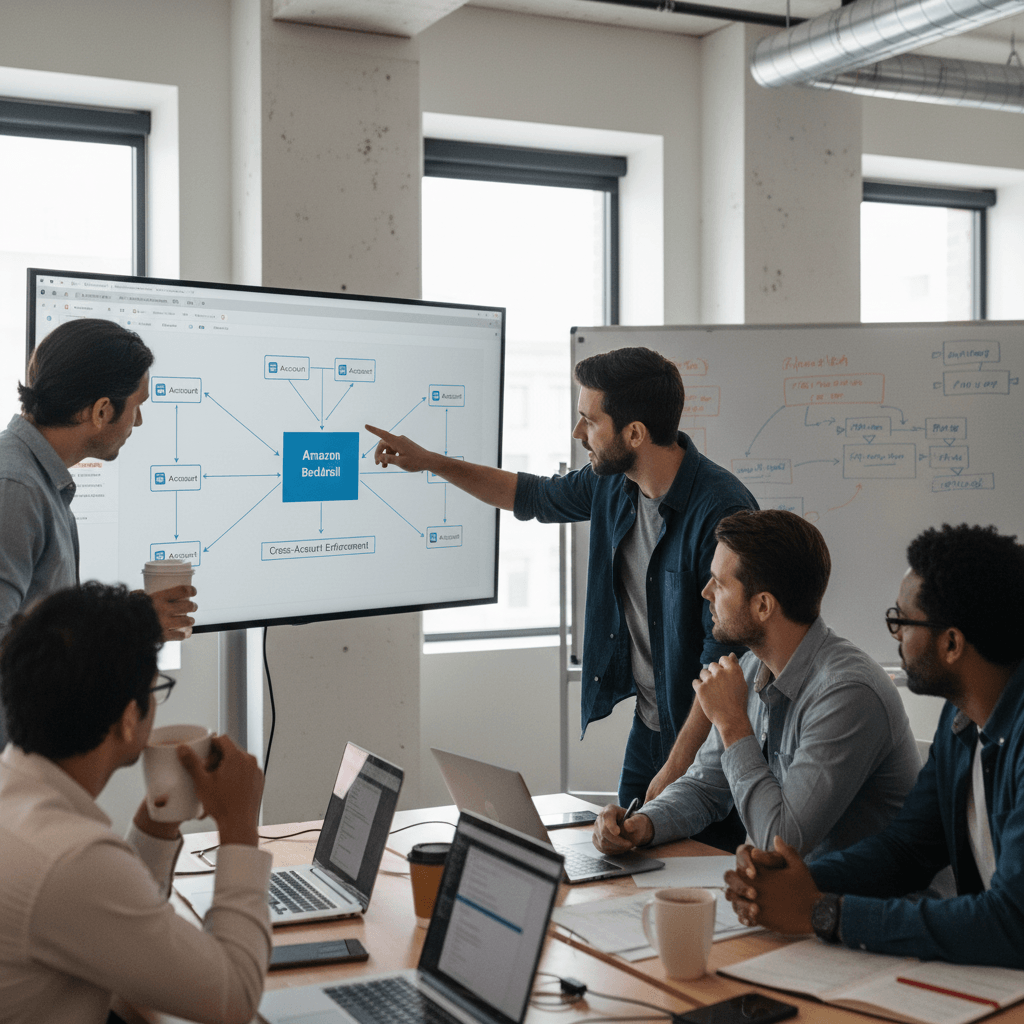

Amazon Bedrock Guardrails now offers cross-account safeguards, enabling centralized enforcement and management of safety controls across multiple AWS accounts within an organization. This enhancement allows users to specify a guardrail in a new Amazon Bedrock policy within the management account of their organization, automatically enforcing configured safeguards across all member entities for every model invocation with Amazon Bedrock.

This organization-wide implementation supports uniform protection across all accounts and generative AI applications with centralized control and management. The capability also offers flexibility to apply account-level and application-specific controls depending on use case requirements, in addition to organizational safeguards.

Organization-level enforcement applies a single guardrail from an organization’s management account to all entities within the organization through policy settings. Account-level enforcement automatically applies the configured safeguards to every Amazon Bedrock model invocation in an AWS account, covering all inference API calls.

Users can establish and centrally manage protection through a unified approach. This supports adherence to corporate responsible AI requirements while reducing the administrative burden of monitoring individual accounts and applications. Security teams no longer need to oversee and verify configurations or compliance for each account independently.

To get started with centralized enforcement in Amazon Bedrock Guardrails, users can configure account-level and organization-level enforcement in the Amazon Bedrock Guardrails console. Before configuring enforcement, users need to create a guardrail and a specific version of it, because a version is immutable and the enforced safeguards therefore cannot change unexpectedly. Prerequisites for the new capability, such as resource-based policies for guardrails, must also be completed.
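As an illustration of the versioning step, the following is a minimal sketch that assumes the boto3 `bedrock` client and its `create_guardrail_version` operation. The helper names and the example guardrail ID are hypothetical, and the client is passed in so the logic can be exercised without AWS credentials.

```python
from typing import Any


def publish_guardrail_version(bedrock_client: Any, guardrail_id: str, description: str) -> str:
    """Publish a numbered version of an existing guardrail and return its version string."""
    response = bedrock_client.create_guardrail_version(
        guardrailIdentifier=guardrail_id,
        description=description,
    )
    # The returned version (for example "1") is what an enforcement would reference.
    return response["version"]


def make_bedrock_client(region: str) -> Any:
    """Create a real client; assumes boto3 is installed and credentials are configured."""
    import boto3  # imported lazily so the helper above stays usable without boto3

    return boto3.client("bedrock", region_name=region)
```

A call might look like `publish_guardrail_version(make_bedrock_client("us-east-1"), "abc123def456", "Baseline for enforcement")`, where the guardrail ID is a placeholder.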

To enable account-level enforcement, users select the guardrail and version to apply automatically to all Bedrock inference calls from the account in a specific Region. Users can also configure content guarding for system prompts and user prompts with either the Comprehensive or the Selective setting. Comprehensive enforces the guardrail on all content, while Selective enforces it only on the content that callers tag.
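To make the Selective setting more concrete, here is a sketch of how a caller might tag content in a Converse-style message. It uses the `guardContent` content block from the existing Converse API. Whether enforced guardrails read this block in exactly the same way is an assumption, and the function names are hypothetical.

```python
from typing import Any, Dict, List


def build_selective_message(context: str, user_input: str) -> Dict[str, Any]:
    """Build a user message in which only `user_input` is tagged for guardrail evaluation."""
    return {
        "role": "user",
        "content": [
            # Plain text block: not tagged, so Selective evaluation is assumed to skip it.
            {"text": context},
            # guardContent block: marks the text the guardrail should evaluate.
            {"guardContent": {"text": {"text": user_input}}},
        ],
    }


def guarded_texts(message: Dict[str, Any]) -> List[str]:
    """Return the text of every guardContent block in a message, in order."""
    return [
        block["guardContent"]["text"]["text"]
        for block in message["content"]
        if "guardContent" in block
    ]
```

Under Comprehensive, no tagging of this kind should be needed, since all content is evaluated.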

After creating the enforcement, users can test and verify it using a role in their account. The account-enforced guardrail should automatically apply to both prompts and outputs. The guardrail response will include enforced guardrail information. Tests can also be conducted by making a Bedrock inference call using InvokeModel, InvokeModelWithResponseStream, Converse, or ConverseStream APIs.
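A verification call could be sketched as follows, assuming the boto3 `bedrock-runtime` client and its `converse` operation. The request carries no guardrail configuration of its own, on the assumption that the account-level enforcement supplies it. The sketch checks only the documented `guardrail_intervened` stop reason, because the exact response field that carries enforced guardrail information is not described here. Function names are hypothetical.

```python
from typing import Any, Dict


def build_converse_request(model_id: str, prompt: str) -> Dict[str, Any]:
    """Build Converse arguments with no guardrailConfig; enforcement is expected to add the guardrail."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
    }


def guardrail_intervened(response: Dict[str, Any]) -> bool:
    """Return True when the Converse response reports that a guardrail blocked or altered content."""
    return response.get("stopReason") == "guardrail_intervened"


def run_check(model_id: str, prompt: str, region: str) -> bool:
    """Send one test prompt; assumes boto3 is installed and credentials are configured."""
    import boto3  # imported lazily so the pure helpers above work without boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    return guardrail_intervened(client.converse(**build_converse_request(model_id, prompt)))
```

Running `run_check` with a prompt that the guardrail is configured to block, from a role in the enforced account, should return `True` if enforcement is active.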

To enable organization-level enforcement, users can go to the AWS Organizations console and enable Bedrock policies. A Bedrock policy can then be created to specify the guardrail and attach it to target accounts or organizational units (OUs). Users can specify their guardrail ARN and version and configure the input tags setting in AWS Organizations.

Once the policy is created, users can attach it to the desired organizational units, accounts, or the root in the Targets tab. The underlying safeguards within the specified guardrail are then automatically enforced for every model inference request across all member entities, ensuring consistent safety controls. Different policies with associated guardrails can be attached to different member entities to accommodate varying requirements.
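The same create-and-attach flow could be scripted. The sketch below assumes the boto3 `organizations` client and its `create_policy` and `attach_policy` operations. The policy type string `BEDROCK_POLICY` is an assumption and should be checked against the AWS Organizations documentation. The policy content is treated as an opaque JSON string supplied by the caller, since its syntax is not covered here, and the function name is hypothetical.

```python
from typing import Any, Iterable

# Assumption: the policy type identifier for Bedrock policies in AWS Organizations.
BEDROCK_POLICY_TYPE = "BEDROCK_POLICY"


def create_and_attach_policy(
    org_client: Any,
    name: str,
    description: str,
    content: str,
    target_ids: Iterable[str],
) -> str:
    """Create a policy from caller-supplied content, attach it to each target, return its ID."""
    created = org_client.create_policy(
        Content=content,  # opaque policy document; this sketch does not generate it
        Description=description,
        Name=name,
        Type=BEDROCK_POLICY_TYPE,
    )
    policy_id = created["Policy"]["PolicySummary"]["Id"]
    # Targets may be account IDs, OU IDs, or the root ID.
    for target_id in target_ids:
        org_client.attach_policy(PolicyId=policy_id, TargetId=target_id)
    return policy_id
```

Passing the client in as a parameter keeps the function testable with a stub. In real use it would be `boto3.client("organizations")`, called from the management account.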

Key considerations: users can choose to include or exclude specific models in Bedrock for inference, and can safeguard partial or complete system prompts and input prompts. Accurate guardrail Amazon Resource Names (ARNs) must be specified in the policy, because an inaccurate ARN can lead to violations and to the guardrail not being enforced. Automated Reasoning checks are not supported with this capability.
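Because an inaccurate ARN can silently defeat enforcement, a quick format check before writing the policy may help. The sketch below is an assumption about the guardrail ARN layout (`arn:<partition>:bedrock:<region>:<account-id>:guardrail/<guardrail-id>`), and the function name is hypothetical. It checks the shape only and cannot confirm that the guardrail exists.

```python
import re
from typing import Dict

# Assumed layout: arn:<partition>:bedrock:<region>:<12-digit account>:guardrail/<id>
_GUARDRAIL_ARN = re.compile(
    r"arn:(?P<partition>aws(?:-[a-z]+)*):bedrock:(?P<region>[a-z0-9-]{1,20}):"
    r"(?P<account>[0-9]{12}):guardrail/(?P<guardrail_id>[a-z0-9]+)"
)


def parse_guardrail_arn(arn: str) -> Dict[str, str]:
    """Split a guardrail ARN into its parts, or raise ValueError if the shape is wrong."""
    match = _GUARDRAIL_ARN.fullmatch(arn.strip())
    if match is None:
        raise ValueError(f"Not a well-formed guardrail ARN: {arn!r}")
    return match.groupdict()
```

For example, `parse_guardrail_arn("arn:aws:bedrock:us-east-1:123456789012:guardrail/abc123def456")["region"]` evaluates to `"us-east-1"`, while an ARN with a missing account ID raises `ValueError`.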

Cross-account safeguards in Amazon Bedrock Guardrails are generally available today in all AWS commercial and GovCloud Regions where Bedrock Guardrails is available. Charges apply to each enforced guardrail according to its configured safeguards.